What are AI code editors?

As the name suggests, these are tools to help users write, understand or debug code using natural language. A user can be anywhere from someone who just started learning to code to an advanced programmer. Apparently, the developers of Cursor use it to build more features in Cursor. Talk about dogfooding!

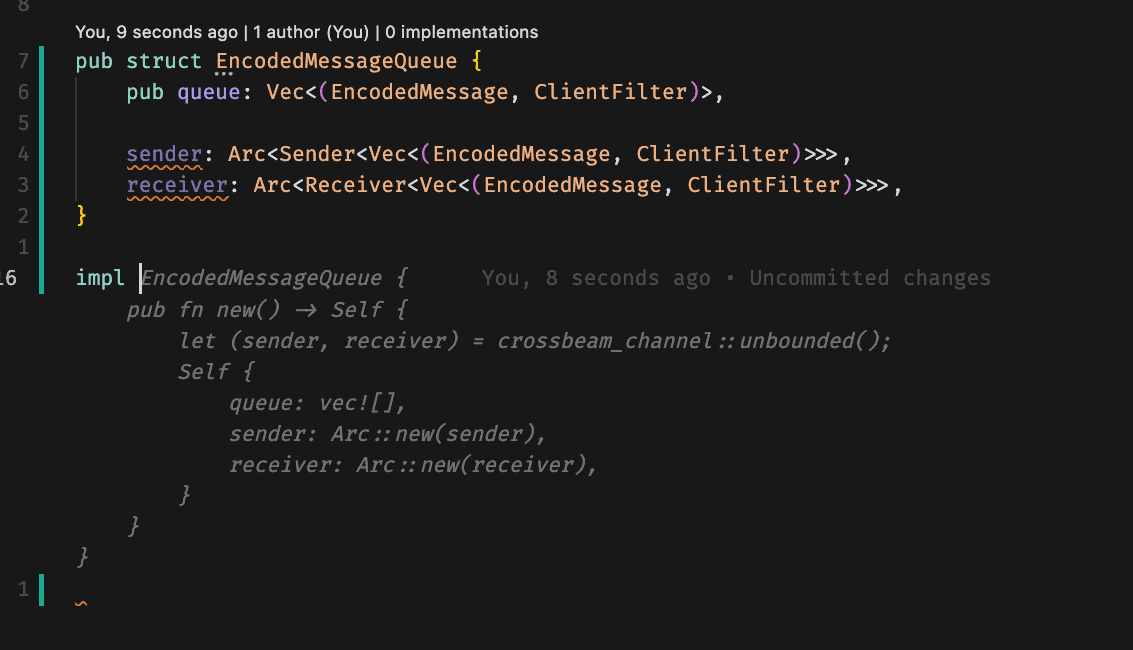

They could be in the form of an extension (GitHub Copilot, Codeium, Tabnine) or a fork of an existing editor (Cursor). They help in generating new code, modifying existing code and chatting with the codebase. I’ll go deeper into every functionality later.

Basic principles of an AI code editor

Every product has driving principles behind it and code editors are no exception. The goal is to make the experience smooth for both beginner and advanced programmers by taking these points into consideration -

Easy to operate - it should not take anything away from the IDEs that programmers are already used to. This is the main reason why most AI editors are extensions to popular existing editors.

Extremely fast - the job of a programming copilot is to help the user generate code/suggestions quickly so that they can iterate fast on whatever they are building. With every keystroke, the copilot should be able to provide the best possible suggestion, which the user can accept by pressing tab.

Understand the user’s intentions - apart from generating high-quality code quickly, the editor should also be able to sense the next step of a user to maintain the state of flow. One way to facilitate this is by predicting where the user would want to go next after generating/modifying a piece of code and placing the cursor there automatically.

Reduce friction - while using an AI code editor, the highs are very high and the lows are very low. Even a little bit of friction is annoying. It becomes critical that any new feature added should 99% of the time make the life of a user easier.

Features

There are some amazing features of AI code editors that have normalized their usage in the programming community in a very short time. I am gonna cover the functionality of those features and the engineering practices behind them to make them work.

Tab (auto-completion)

This is probably the most used feature of an AI code editor. It could be thought of as a really fast programmer looking over your shoulder and suggesting/typing for you. The editor suggests a piece of code where the cursor is based on the lines written around it. It’s up to the user to accept that suggestion (by pressing tab) or keep writing. Every keystroke provides better context to the editor about the user’s intentions and hence the suggestions also change (ideally, become better) as the user types.

Engineering

The biggest engineering consideration while designing this feature has to be latency. The editor should be able to provide suggestions in real time as the user is typing. Delays in these predictions will significantly degrade the experience. One more thing to note here is that bits-per-byte (a measure of an LLM's loss) for code generation is the lowest among all the use cases of LLMs. The reason for this is that code follows a very strict grammar (syntax), so a lot of times the next token is quite predictable.

These models also take in a huge prompt with a lot of context (code in the file and from other files) and produce a limited number of tokens, a pattern referred to here as sparsity.

Given the requirements above, small, custom fine-tuned models do really well in this use case compared to frontier models. Also, due to the sparsity of this application MoE is a good fit.

The model should be fine-tuned on the Fill-in-the-Middle (FIM) task. During inference, the cursor can have code written both before and after it. The model should be able to predict the missing middle given context in a format like <prefix>…</prefix><suffix>…</suffix>.
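
To make the FIM format concrete, here is a minimal sketch of how a prompt could be assembled from the text around the cursor. The sentinel strings mirror the format above and are only illustrative (each FIM-trained model defines its own special tokens), and `build_fim_prompt` and `max_chars` are hypothetical names.

```python
# Sentinel strings are illustrative; each FIM-trained model defines its own.
PREFIX_OPEN, PREFIX_CLOSE = "<prefix>", "</prefix>"
SUFFIX_OPEN, SUFFIX_CLOSE = "<suffix>", "</suffix>"


def build_fim_prompt(source: str, cursor: int, max_chars: int = 2000) -> str:
    """Split the file at the cursor and wrap both sides in FIM sentinels."""
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor is outside the document")
    half = max_chars // 2
    prefix = source[max(0, cursor - half):cursor]  # code just before the cursor
    suffix = source[cursor:cursor + half]          # code just after the cursor
    return f"{PREFIX_OPEN}{prefix}{PREFIX_CLOSE}{SUFFIX_OPEN}{suffix}{SUFFIX_CLOSE}"


code = "def add(a, b):\n    return \n\nprint(add(1, 2))\n"
cursor = code.index("return ") + len("return ")  # cursor sits right after "return "
prompt = build_fim_prompt(code, cursor)
```

The model is then asked to generate only the missing middle (here, something like `a + b`), using both sides of the cursor as context.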

Every incremental keystroke from the user should result in a quick prediction - screams prompt caching to me! Without prompt caching, the latency is going to be horrible and it would put a lot of load on the GPUs. Additionally, KV caching is also a must to process the prompts swiftly.
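
A toy sketch of why prompt caching pays off here: if consecutive prompts share a long prefix, only the tokens after the first difference need fresh computation. `PrefixCache` is a hypothetical stand-in that just counts tokens; a real inference server would store attention keys and values for the shared prefix.

```python
from typing import List, Sequence


def common_prefix_len(a: Sequence[int], b: Sequence[int]) -> int:
    """Number of leading tokens shared by the two sequences."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


class PrefixCache:
    """Tracks which prompt tokens already have cached state (toy model of a KV cache)."""

    def __init__(self) -> None:
        self.cached_tokens: List[int] = []

    def tokens_to_compute(self, prompt: Sequence[int]) -> int:
        reused = common_prefix_len(self.cached_tokens, prompt)
        self.cached_tokens = list(prompt)  # the new prompt becomes the cached one
        return len(prompt) - reused


cache = PrefixCache()
first = cache.tokens_to_compute([1, 2, 3, 4, 5])      # cold cache: every token is new
second = cache.tokens_to_compute([1, 2, 3, 4, 5, 6])  # one keystroke later: one new token
third = cache.tokens_to_compute([1, 2, 9, 4, 5, 6])   # edit at index 2: recompute from there
```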

To keep the memory footprint of the KV cache in check, something like multi-query attention also comes into play.
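
As a rough back-of-the-envelope check, the KV cache size scales with the number of key/value heads, which is exactly what multi-query attention reduces to one. The model dimensions below are made-up assumptions, not the configuration of any real product.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Size of the KV cache for one sequence (fp16 values by default)."""
    # Factor of 2: one tensor for keys and one for values in every layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model: 32 layers, 32 attention heads of dimension 128, 8192-token prompt.
mha = kv_cache_bytes(n_layers=32, n_kv_heads=32, head_dim=128, seq_len=8192)
mqa = kv_cache_bytes(n_layers=32, n_kv_heads=1, head_dim=128, seq_len=8192)

print(f"multi-head:  {mha / 2**20:.0f} MiB")
print(f"multi-query: {mqa / 2**20:.0f} MiB")
```

With these numbers the cache shrinks by a factor equal to the number of heads, since all query heads share a single key/value head.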

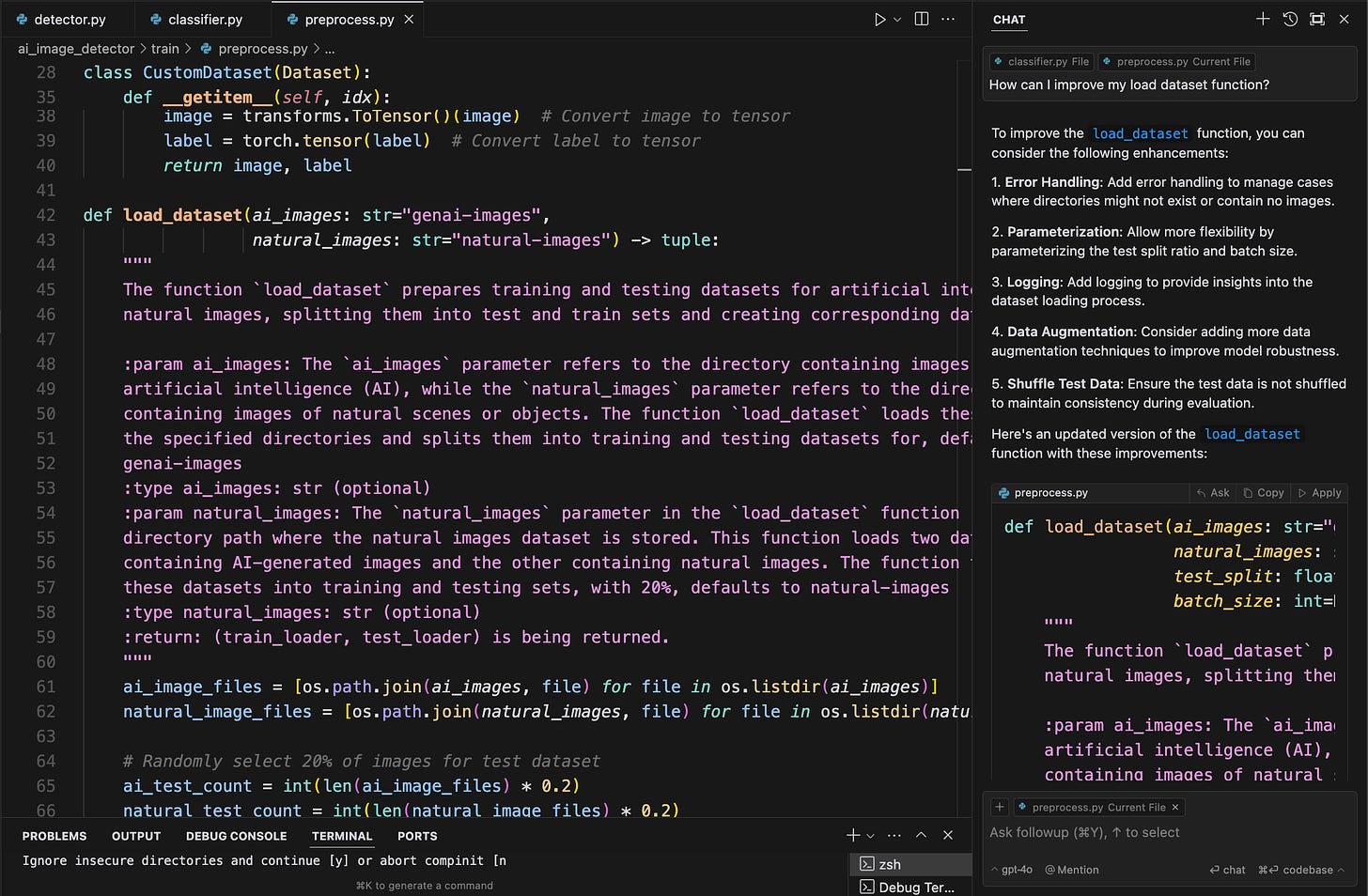

Chat with a codebase

Most times, the user will be working on a collaborative codebase where multiple developers contribute. The ability to ask questions where the user doesn’t know which specific file/function to reference can come in handy. The chat functionality can answer questions both in terms of natural language and code. As you might have already guessed, context awareness is super critical here. The model answering the question should be provided with high-quality context to produce a high-quality response. Given that there could be so much that can be passed as context, determining what’s best and handling it efficiently becomes the focus.

Engineering

As mentioned above, context awareness is important for this functionality. This means that the user's whole codebase (which could be a huge enterprise codebase as well) should be chunked up, embedded and stored in a database to retrieve from. The database has to be maintained remotely as storing it locally might choke up the user’s compute and memory (can’t expect everyone to use an M3 MacBook Pro :])

Making sure that the remote database is up to date with the local codebase can be a major network overhead on the user side if not done efficiently. It can also overload the remote database with terabytes of read-and-write operations. One solution that Cursor implemented is using a Merkle tree to sync the databases. It allows hierarchical reconciliation rather than checking the hash for each file every time.
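
To illustrate hierarchical reconciliation, here is a minimal sketch of a hash tree over a nested directory structure. It is not Cursor's implementation: the in-memory dict layout and the helper names (`merkle`, `changed_paths`) are assumptions made for the example.

```python
import hashlib
from typing import List


def sha(data: str) -> str:
    return hashlib.sha256(data.encode()).hexdigest()


def merkle(node) -> dict:
    """Hash a file (str contents) or a directory (dict of name -> node)."""
    if isinstance(node, str):
        return {"hash": sha(node), "children": {}}
    children = {name: merkle(child) for name, child in sorted(node.items())}
    # A directory's hash depends on the names and hashes of everything below it.
    combined = "".join(f"{name}:{c['hash']};" for name, c in children.items())
    return {"hash": sha(combined), "children": children}


def changed_paths(local: dict, remote: dict, path: str = "") -> List[str]:
    if local["hash"] == remote["hash"]:
        return []  # identical subtree: nothing below needs checking
    if not local["children"] and not remote["children"]:
        return [path]  # a leaf whose contents differ
    out: List[str] = []
    for name in sorted(set(local["children"]) | set(remote["children"])):
        l, r = local["children"].get(name), remote["children"].get(name)
        child = f"{path}/{name}" if path else name
        if l is None or r is None:
            out.append(child)  # added or deleted on one side
        else:
            out.extend(changed_paths(l, r, child))
    return out


remote_tree = {"src": {"a.py": "print(1)", "b.py": "print(2)"}, "docs": {"readme.md": "hi"}}
local_tree = {"src": {"a.py": "print(1)", "b.py": "print(3)", "c.py": "new"},
              "docs": {"readme.md": "hi"}}
changed = changed_paths(merkle(local_tree), merkle(remote_tree))
```

Because the `docs` subtree hashes match, it is skipped after a single comparison; only `src` is descended into, and only the changed files would need re-embedding.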

The bar for the quality of context provided to the LLM should be kept high to produce a high-quality response. The LLMs can take a lot of tokens in the prompt but the performance decreases with a large context. It becomes essential that the context provided to an LLM is rich in information and short. In Codeium both autocompletion and chat share the same context generation mechanism but with different context lengths.

In Cursor, every query can have around 500k tokens of context - the code and the metadata. This context is passed through a re-ranker to get the most important 8k tokens and pass them in the prompt. This length can vary for different features (chat vs tab).
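
The selection step can be sketched as "score every candidate, then greedily fill a token budget". The word-overlap `score` and whitespace `count_tokens` below are deliberately crude placeholders for a learned re-ranker and a real tokenizer; all names are hypothetical.

```python
from typing import List


def count_tokens(text: str) -> int:
    return len(text.split())  # crude whitespace proxy for a tokenizer


def score(query: str, chunk: str) -> float:
    """Fraction of query words found in the chunk (placeholder for a re-ranker model)."""
    q, c = set(query.lower().split()), set(chunk.lower().split())
    return len(q & c) / len(q) if q else 0.0


def select_context(query: str, chunks: List[str], budget: int) -> List[str]:
    ranked = sorted(chunks, key=lambda ch: score(query, ch), reverse=True)
    picked, used = [], 0
    for ch in ranked:
        cost = count_tokens(ch)
        if used + cost <= budget:  # skip chunks that would overflow the budget
            picked.append(ch)
            used += cost
    return picked


chunks = [
    "def parse config file path",
    "def render html template page",
    "load config defaults",
]
context = select_context("parse config file", chunks, budget=8)
```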

There’s always a consideration of fine-tuning a model on an enterprise’s data for better performance. Arguably, context baked in a model’s weights performs better than passing that context as a prompt. But there’s also the fear of degradation of general coding capabilities. Not every developer has access to all the code in an enterprise, so a model fine-tuned on the whole codebase can act as a jailbreak by leaking code the user isn't allowed to see. The codebase in a company also changes quickly, so a frequent refresh of the model is required to keep up.

Despite these limitations, Codeium believes that fine-tuning can be better than using generic models. This blog explains it well.

Other ML/Engineering Aspects

Apply - as counterintuitive as it sounds, the apply functionality (merging the code from chat to the file) is not a deterministic algorithm. Even the frontier models mess up the line numbers often. The Cursor team has built a custom fine-tuned model just for this application. They use the frontier models for generating the plan and use the smaller models for applying.

Context pinning - a user can provide the scope of their work - a collection of files, folders or repositories. This way the editor can generate recommendations just from this scope which results in a better experience.

RAG vs Context Awareness - context awareness is RAG plus a lot of context - which file is open, where the cursor is, chat history, etc. It encapsulates the user’s intentions as well.

Code parsing - leveraging the structured nature of code (functions, classes, files, etc.) helps identify the blocks to embed and index individually. At retrieval, one can run a lot of smart logic to pull context from several different sources - not just the current and open tabs, but also files in the same directory, files referenced in important statements, and of course, the full repository embedding-based index.
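
As a small illustration of structure-aware chunking, Python's standard `ast` module can split a file into top-level functions and classes, each of which could then be embedded and indexed on its own. This is only a sketch for Python sources; `chunk_python_source` and the chunk dictionary layout are hypothetical, and real editors need parsers for many languages.

```python
import ast
from typing import Dict, List


def chunk_python_source(source: str) -> List[Dict[str, object]]:
    """Return one chunk per top-level function or class definition."""
    chunks = []
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks.append({
                "name": node.name,
                "kind": type(node).__name__,
                "start_line": node.lineno,
                "end_line": node.end_lineno,
                "text": ast.get_source_segment(source, node),  # exact source of the block
            })
    return chunks


SAMPLE = '''import os

def load(path):
    return open(path).read()

class Index:
    def add(self, doc):
        pass
'''

chunks = chunk_python_source(SAMPLE)
for c in chunks:
    print(c["kind"], c["name"], c["start_line"], c["end_line"])
```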

Evals

Any software system needs evaluation metrics to improve over time. For an AI code editor, it is important to evaluate both individual models and the overall system (model + context engine).

Fill-In-Middle models

Evaluating a model against HumanEval might not be the best idea. It has very simple functions with the functionality explained in the docstring. That’s not how people usually code. Also, the functions on which the evals are running have probably been seen by the model during training.

A better way could be creating a collection of functions from the web that have unit tests. Delete the said functions and re-generate them with the new model. Run the tests and get the results. This way one could compare the accuracy as well as the latency of the generations.
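
A minimal sketch of such a harness, assuming each case stores a function signature and a string of assert-based tests. `fake_model` is a hypothetical stand-in for the model under evaluation, and running generated code with `exec` like this is only acceptable inside a sandbox.

```python
import time
from typing import Callable, Dict, List


def evaluate(generate: Callable[[str], str], cases: List[Dict[str, str]]) -> Dict[str, float]:
    """Regenerate each deleted function, run its tests, and record latency."""
    passed, latencies = 0, []
    for case in cases:
        start = time.perf_counter()
        candidate = generate(case["signature"])
        latencies.append(time.perf_counter() - start)
        namespace: dict = {}
        try:
            exec(candidate, namespace)      # define the regenerated function
            exec(case["tests"], namespace)  # asserts raise on failure
            passed += 1
        except Exception:
            pass  # any error counts as a failed case
    return {
        "pass_rate": passed / len(cases),
        "avg_latency_s": sum(latencies) / len(latencies),
    }


def fake_model(signature: str) -> str:
    # Hypothetical model: gets `add` right and `is_even` wrong.
    bodies = {
        "def add(a, b):": "    return a + b",
        "def is_even(n):": "    return n % 2 == 1",
    }
    return signature + "\n" + bodies[signature]


cases = [
    {"signature": "def add(a, b):", "tests": "assert add(2, 3) == 5"},
    {"signature": "def is_even(n):", "tests": "assert is_even(4) and not is_even(3)"},
]
report = evaluate(fake_model, cases)
```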

Codeium also follows these steps when training a new model -

Pre-merge evals - to make sure nothing obvious is breaking.

Internal dogfooding - ask the team internally to try it out and look out for major degradations

Beta testing - let some loyal users try it out (discord members)

A/B testing - roll out to some users and see if the acceptance rate is better or worse

End-to-end metrics

Apart from evaluating the individual models, measuring how the overall system works is crucial in understanding the business value of a software system. To deliver maximum value to the customers, the metrics should be designed keeping all factors in mind to avoid any smokescreens. Codeium uses these metrics -

Characters per opportunity (CPO)

$$
\text{Characters per Opportunity} = \text{Attempt rate} \times \text{Feedback rate} \times \text{Acceptance rate} \times \frac{\text{Avg num tokens}}{\text{Acceptance}} \times \frac{\text{Avg num characters}}{\text{Token}}
$$

Attempt rate: how often the AI even tries to make a prediction. Reasons for not attempting include latency or contextual filters.

Feedback rate: what percentage of suggestions reach the developer. This captures the ability of a coding assistant to quickly present a suggestion to the developer after context building and model inference.

Acceptance rate: fraction of suggestions shown to the developer that are accepted

Avg num tokens/acceptance: the value each accepted suggestion adds for the developer, measured in tokens

Avg num characters/token: converts the above from tokens to characters
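
A quick worked example of the product, using made-up numbers purely for illustration (they are not Codeium's figures):

```python
def characters_per_opportunity(attempt_rate: float, feedback_rate: float,
                               acceptance_rate: float, tokens_per_acceptance: float,
                               chars_per_token: float) -> float:
    """Expected number of AI-written characters per opportunity to suggest."""
    return (attempt_rate * feedback_rate * acceptance_rate
            * tokens_per_acceptance * chars_per_token)


# Hypothetical values: the assistant attempts 80% of the time, 90% of attempts
# reach the developer, 30% of those are accepted, and an accepted suggestion
# averages 10 tokens of about 4 characters each.
cpo = characters_per_opportunity(0.8, 0.9, 0.3, 10, 4)
print(f"CPO is about {cpo:.2f} characters per opportunity")
```

Because the factors multiply, improving only one of them (say, acceptance rate) while hurting another (say, attempt rate) can leave the overall metric unchanged, which is the point of tracking the product.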

Percentage Code Written

As the name suggests, it measures how much code in a codebase was generated by AI. It gives a sense of the overall value added to a developer’s life.

Latency

Here’s a picture of what goes on behind the scenes with each keystroke -

Keystroke → logic to collect context for that text → context is passed over the network to a powerful GPU for inference → inference result is passed back over to the client → some logic runs to show it in the editor.

Every operation in the above flow has to be super quick to present a suggestion to the user in no time! Using a third-party API and not your own inference engine and hardware management can add a lot to latency and costs. The third-party API can have rate limits and scheduling latencies as well. Here are some practices to keep things in check -

Batch processing - the inference server should be able to batch inputs of variable lengths together, for example by padding them to a common length.

Quantization - use lower precision weights to make inference faster and load the model into memory faster due to smaller size.

Speculative decoding - speeds up inference by letting a small draft model propose future tokens, which the main model then verifies.

Context caching - while a developer is working in a file the context can be cached for faster retrieval as context doesn’t change much from keystroke to keystroke.
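
Of the practices above, speculative decoding is the least obvious, so here is a toy sketch of its greedy variant. The two "models" are plain functions invented for the example; real systems verify all draft tokens in one batched forward pass of the large model and use a probabilistic acceptance rule when sampling.

```python
from typing import Callable, List

Model = Callable[[List[int]], int]  # maps a token sequence to the next token (greedy)


def speculative_decode(target: Model, draft: Model, prompt: List[int],
                       n_new: int, k: int = 4) -> List[int]:
    tokens = list(prompt)
    while len(tokens) - len(prompt) < n_new:
        # 1) The cheap draft model proposes k tokens.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(tokens + proposal))
        # 2) The target model checks each proposed position.
        for i in range(k):
            expected = target(tokens + proposal[:i])
            if expected != proposal[i]:
                # Keep the agreed prefix plus the target's own correction.
                tokens.extend(proposal[:i] + [expected])
                break
        else:
            tokens.extend(proposal)  # every draft token was accepted
    return tokens[:len(prompt) + n_new]


def target_model(seq: List[int]) -> int:
    return (seq[-1] + 1) % 10  # toy "large" model: counts upward


def draft_model(seq: List[int]) -> int:
    return 0 if seq[-1] == 4 else (seq[-1] + 1) % 10  # toy draft: wrong after a 4


output = speculative_decode(target_model, draft_model, prompt=[0], n_new=8)
```

The output is identical to what the target model would produce on its own; the saving comes from the target confirming several draft tokens per step instead of generating them one at a time.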

I’ve packed a lot of information here! It’s such an exciting space with a huge impact on the tech community. Learning about the cutting-edge engineering that goes behind products like this is both humbling and inspiring. Most of the above information is referenced from this amazing podcast of the Cursor team with Lex and ~20 tech blogs from Codeium.